Team name: Owen Derby and Brok Howard from Team VRML (Virtual Review Management Loop)

[The original VRML can be found here https://en.wikipedia.org/wiki/VRML – much respect to those champions who paved the way before us]

What Big AEC problem are you trying to solve?

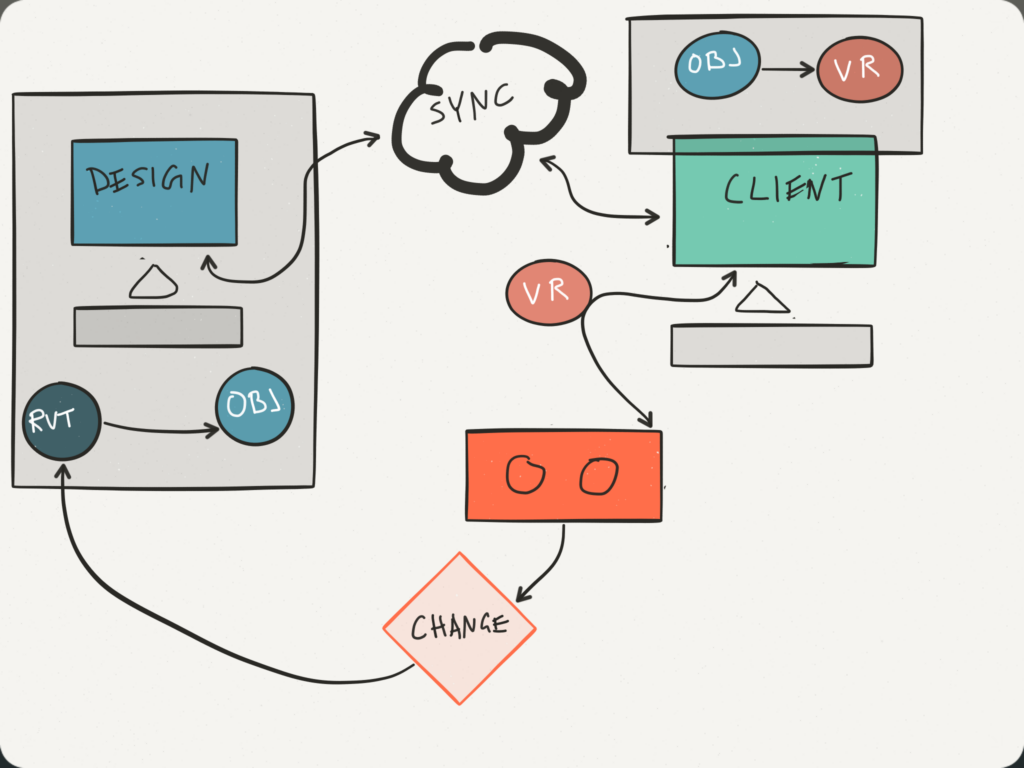

The design process involves change: lots of interactions, and fast. Communicating with clients can be a challenge when trying to include them in that process. In recent months, Virtual Reality has started to gain a stronger foothold in the AEC industry. We are not building games, but real human experiences in the AEC world. We do not experience these through flat 2D drawings or high-resolution renderings. With advancements in heads-up display gear, the hardware is starting to catch up to the dream of a virtual experience prior to construction.

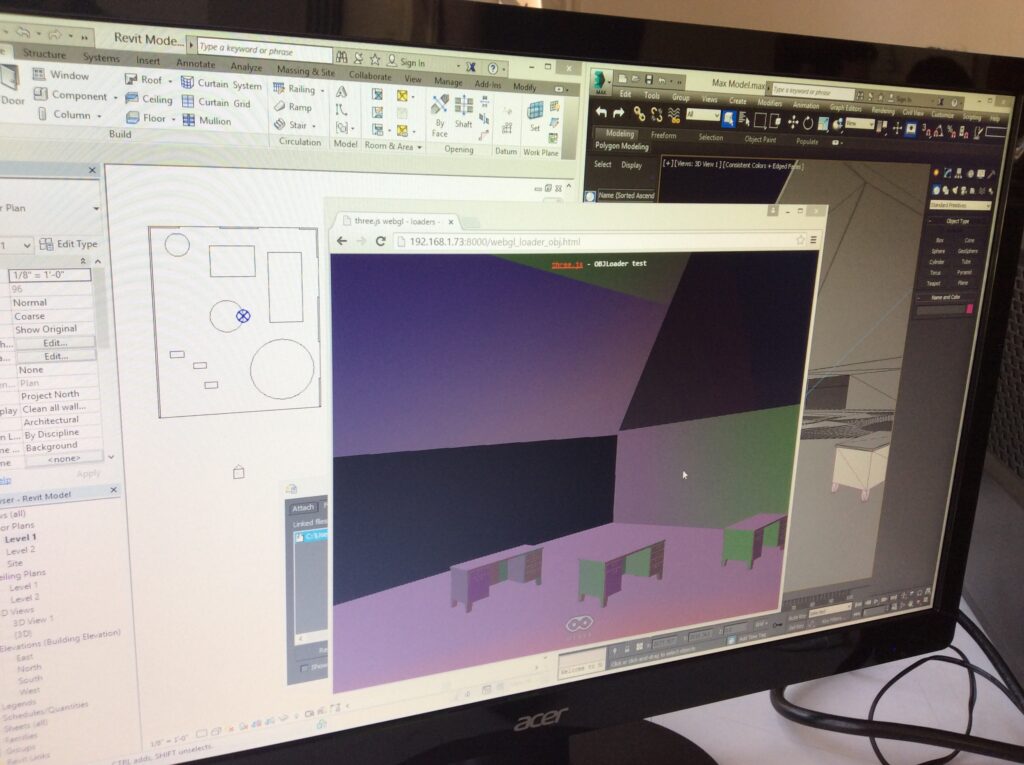

Using an Oculus Rift DK2, Revit, and open source software, we have linked the hardware, the design, and the web. Using three.js, WebGL, and the OBJ file format, we are able to view and move around the design using a URL.
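To make the OBJ step more concrete, here is a minimal sketch of what reading an OBJ file involves. It is an illustration only and not the project's code: `parseObj` is a hypothetical helper that handles just vertex (`v`) and face (`f`) records, and in a real three.js scene an existing OBJ loader would normally do this work.

```javascript
// Minimal sketch: parse only "v" (vertex) and "f" (face) records of OBJ text.
// parseObj is a hypothetical helper for illustration, not part of three.js.
function parseObj(text) {
  const vertices = []; // each entry is [x, y, z]
  const faces = [];    // each entry is a list of zero-based vertex indices

  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.trim();
    // Skip blank lines and comments.
    if (line === "" || line.startsWith("#")) continue;

    const parts = line.split(/\s+/);
    const keyword = parts[0];

    if (keyword === "v") {
      // "v x y z": keep the first three coordinates.
      vertices.push(parts.slice(1, 4).map(Number));
    } else if (keyword === "f") {
      // Face tokens look like "v", "v/vt", "v//vn" or "v/vt/vn";
      // only the vertex index is kept here.
      faces.push(parts.slice(1).map((token) => {
        const index = parseInt(token.split("/")[0], 10);
        // OBJ indices are 1-based; negative ones count back from the
        // most recently defined vertex.
        return index > 0 ? index - 1 : vertices.length + index;
      }));
    }
    // All other record types (normals, materials, groups) are ignored.
  }
  return { vertices, faces };
}

// Usage: a single triangle.
const triangle = parseObj("v 0 0 0\nv 1 0 0\nv 0 1 0\nf 1 2 3\n");
console.log(triangle.vertices.length, triangle.faces.length);
```

The parsed vertices and faces are the raw material a renderer such as three.js turns into a mesh that WebGL can draw.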

The client can simply open a browser link from an email, connect a future commercially available Heads Up Display (HUD) like the Oculus Rift (launching next year), and see the design. “Can you change ‘this here’?” asks the client. The designer updates the design, saves and uploads the file, the browser refreshes, and the client sees the change. They continue this process using the same link. The URL can be accessed by anyone with the link, and any number of people can experience the design at the same time.
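The write-up does not say how the refresh is triggered, so the following is only a sketch of one way the loop could be automated: the page periodically asks the server for a version token of the model file and reloads when it changes. `createChangeDetector` and `checkForUpdate` are hypothetical names, and using the HTTP `ETag` header as the token assumes the file host actually sends one.

```javascript
// Sketch: detect that the uploaded model file has changed.
// Both functions are hypothetical helpers, not part of the project.
function createChangeDetector() {
  let lastToken = null; // version token seen on the previous check

  return function hasChanged(token) {
    // No token available: we cannot tell, so do not trigger a reload.
    if (token === null || token === undefined) return false;
    // The very first token only establishes a baseline.
    const changed = lastToken !== null && token !== lastToken;
    lastToken = token;
    return changed;
  };
}

// Assumption: the server exposes an ETag header for the model URL.
async function checkForUpdate(modelUrl, hasChanged) {
  const response = await fetch(modelUrl, { method: "HEAD" });
  return hasChanged(response.headers.get("ETag"));
}

// Usage of the detector on its own:
const hasChanged = createChangeDetector();
hasChanged("v1");              // first token, nothing to compare against
console.log(hasChanged("v2")); // the token differs from the previous one
```

In a page, `checkForUpdate` could be called on a timer, reloading the model whenever it resolves to `true`, so every viewer on the same link picks up the designer's latest upload.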

Can your solution be implemented on Monday?

Yes, this is mostly using existing tools and open source software.

How much of the code was built this weekend?

Because we’re mostly reusing open source code, very little was built this weekend. However, everything we built ourselves was built this weekend.

Technical difficulty?

Medium (easy for the designer, a medium challenge for the developer). WebVR support is very new, which limited us to the nightly build of Chromium. That, along with a developer kit of the not-yet-released Rift hardware and a file sync system (in this case Dropbox), created some challenges, but the process itself is simple and understandable.

Impressiveness?

Bonus: What open standards do you support or use?

We used the OBJ open 3D file format, three.js rendering in the browser, and WebVR open standards.

Bonus: Is your project open source?

Yes, here it is on GitHub: https://github.com/oderby/aec-hack-vrml

Here is us at AEC Hackathon 2.3 in the live demo on YouTube.